Observations

Business AI Issues: What it boils down to

As generative AI tools become more prevalent in the software that we use at work, I’ve been observing a trend toward adding “generative AI terms” to contracts to address the main business risks that customers perceive in AI tech. Most of these tools work by presenting a user interface that communicates on the backend with a large language model (like GPT-4) through an API (like the OpenAI or Claude APIs).

The key concerns that businesses have about vendors that offer AI-powered tools can be boiled down to the following:

1. Control over data inputs. The prompts and inputs that are given to AI tools could contain confidential, sensitive, proprietary and personal information. A typical feature that vendors are now throwing into their products to show they have some AI capability is the ability to query a large dataset in natural language. For example, let’s say a vendor offers HR software that keeps personnel records. To answer questions about those records, the tool has to ingest all the HR data. The prompts submitted by users may also contain revealing information: “If we wanted to pay severance of 1 month’s base salary to everyone in the Supercharging division, how much would that cost?”

These inputs can be used to train AI models to improve their efficacy in general, and also specifically for the organization that is using them. AI models are a bit of a black box, even to those who created them, so when inputs are integrated into a model, it’s not clear exactly what happens to them. People therefore get nervous that their data is now floating inside that model in a form that will allow other people to access it. There have been allegations of this happening.

Businesses want to ensure that confidential data inputs remain that way, so there is a clear trend towards prohibiting vendors from using customer data to train models unless express prior consent is obtained. “Prior consent” can be as extreme as requiring a vendor to obtain a signed document from someone senior at the customer organization before any AI model training features are enabled. More often, however, vendors release model training features in a disabled state and require an administrator to enable them (the “opt-in approach”). This is the emerging market standard (e.g. Zoom), although not all vendors do this (e.g. Notion).

Slack was recently raked over the coals for using confidential customer data to train its AI models on an opt-out basis. See: Slack users horrified to discover messages used for AI training.

Note that there is a difference between shared models and private models. A private model is an AI model that has been built or fine-tuned for a specific organization and is not made available to any other organization. Customers are more relaxed about training these types of models, although they typically want the models to be deleted upon termination of the contract.
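To make the opt-in versus opt-out distinction above concrete, here is a minimal Python sketch. The names (`TrainingConsent`, `enable_training`, `can_train_on`) are hypothetical and do not correspond to any vendor’s actual product. The only point is that the training flag defaults to off and can only be switched on by an administrator.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TrainingConsent:
    """Hypothetical per-customer setting; not any real vendor's API."""
    enabled: bool = False              # opt-in: off until someone turns it on
    enabled_by: Optional[str] = None   # who consented, kept for audit purposes


def enable_training(user: str, is_admin: bool) -> TrainingConsent:
    """Return a consent record with training enabled; admins only."""
    if not is_admin:
        raise PermissionError(f"{user} is not allowed to enable model training")
    return TrainingConsent(enabled=True, enabled_by=user)


def can_train_on(consent: TrainingConsent) -> bool:
    """Customer data may be used for training only with recorded consent."""
    return consent.enabled and consent.enabled_by is not None


default = TrainingConsent()
print(can_train_on(default))                          # False
print(can_train_on(enable_training("alice", True)))   # True
```

An opt-out design would simply flip the default to `enabled=True`, which is why it attracts so much more criticism: the customer’s data is in scope for training unless someone notices and turns it off.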

2. Output quality. AI still has issues with accuracy and bias. It can be confidently incorrect. It’s standard for vendors to disclaim all responsibility for use of outputs. And it’s also standard for companies to tell their team members that they need to treat AI like a junior employee and double check the work.

3. Ownership of the output. There are two aspects to this: (1) who owns the output of generative AI, and (2) is there any risk of IP infringement?

The first issue is easy: the standard is that the output is owned by the customer, rather than the vendor.

The second issue is the billion-dollar question. Many AI models have been trained on publicly available corpora of data on the internet. However, “publicly available” doesn’t mean “usable for any purpose”: there’s normally someone who owns the copyright in whatever appears on the internet. In many cases, the training has occurred without the consent of the copyright owner, so there’s an argument that the AI model creators are engaging in mass copyright infringement. There are multiple lawsuits over this, including suits by some large news organizations against OpenAI and Microsoft for scraping their online articles. Music publishers and book publishers are in the fray as well.

The ultimate outcome of this, I believe, is that AI model creators will cut licensing deals with major copyright owners (e.g. OpenAI and Reddit just struck a licensing deal) and just continue ripping off small copyright owners. Maybe some sort of standard industry-wide licensing model will emerge if SCOTUS rules that unlicensed training of AI models on copyrighted works constitutes infringement (rather than fair use, as the AI companies are claiming).

In any event, as users of AI models, businesses are concerned that the outputs they use will be tainted and could subject them to infringement claims. I don’t think that issue is a big concern for most businesses. Firstly, it seems that infringement would be hard to prove in a lot of cases. Secondly, the (very) deep pockets to sue here are the AI model creators, not their customers. In response to this concern, Microsoft has offered some of its business customers an IP infringement indemnity for its Copilot product, in case the customer is sued because of what Copilot spits out. I don’t think that kind of indemnity will become standard.

4. Legal compliance. AI laws are starting to get passed by legislatures around the world. For example, the EU has passed the AI Act. California’s bot disclosure law, which took effect in 2019, makes it illegal in certain commercial and political contexts to pass chatbots off as human, and this was before ChatGPT was released. Both vendors and customers need to be mindful of what will be an increasing amount of regulation over the AI space.

We are so back (or not)

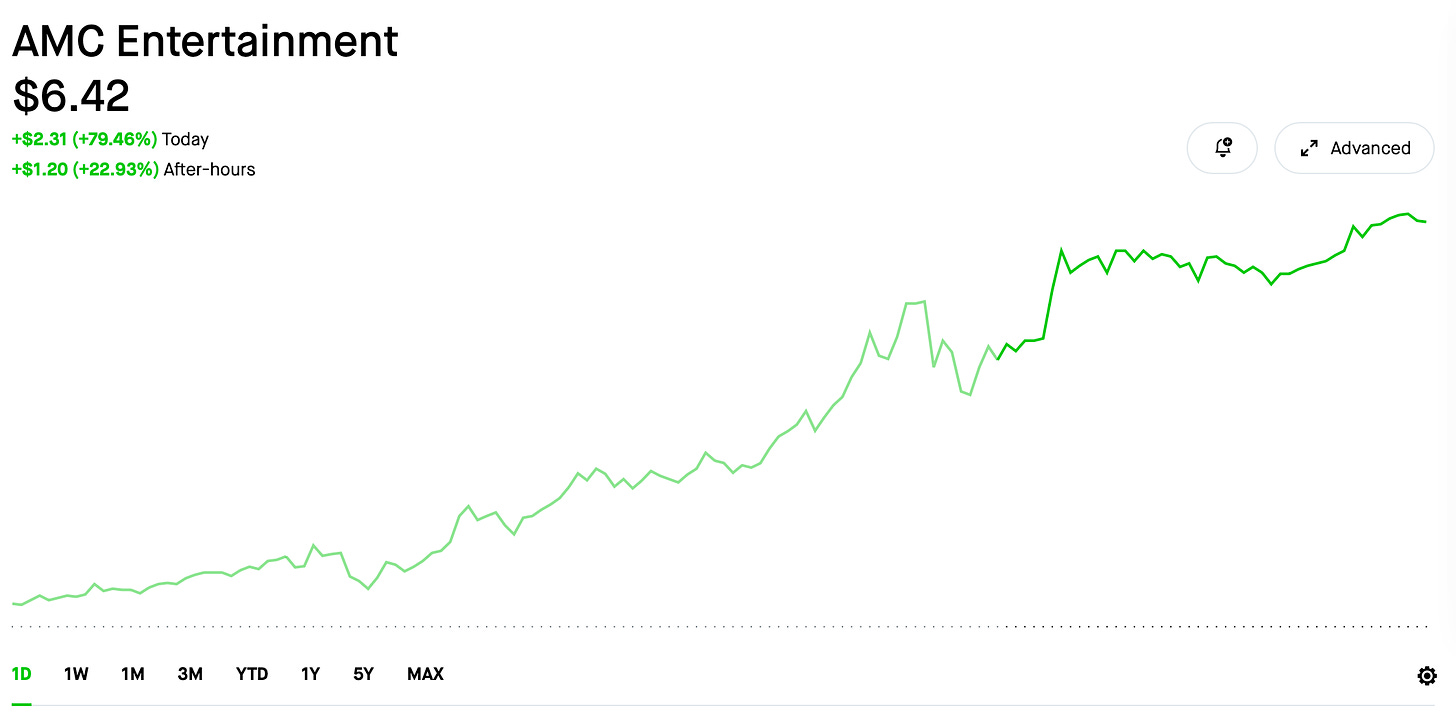

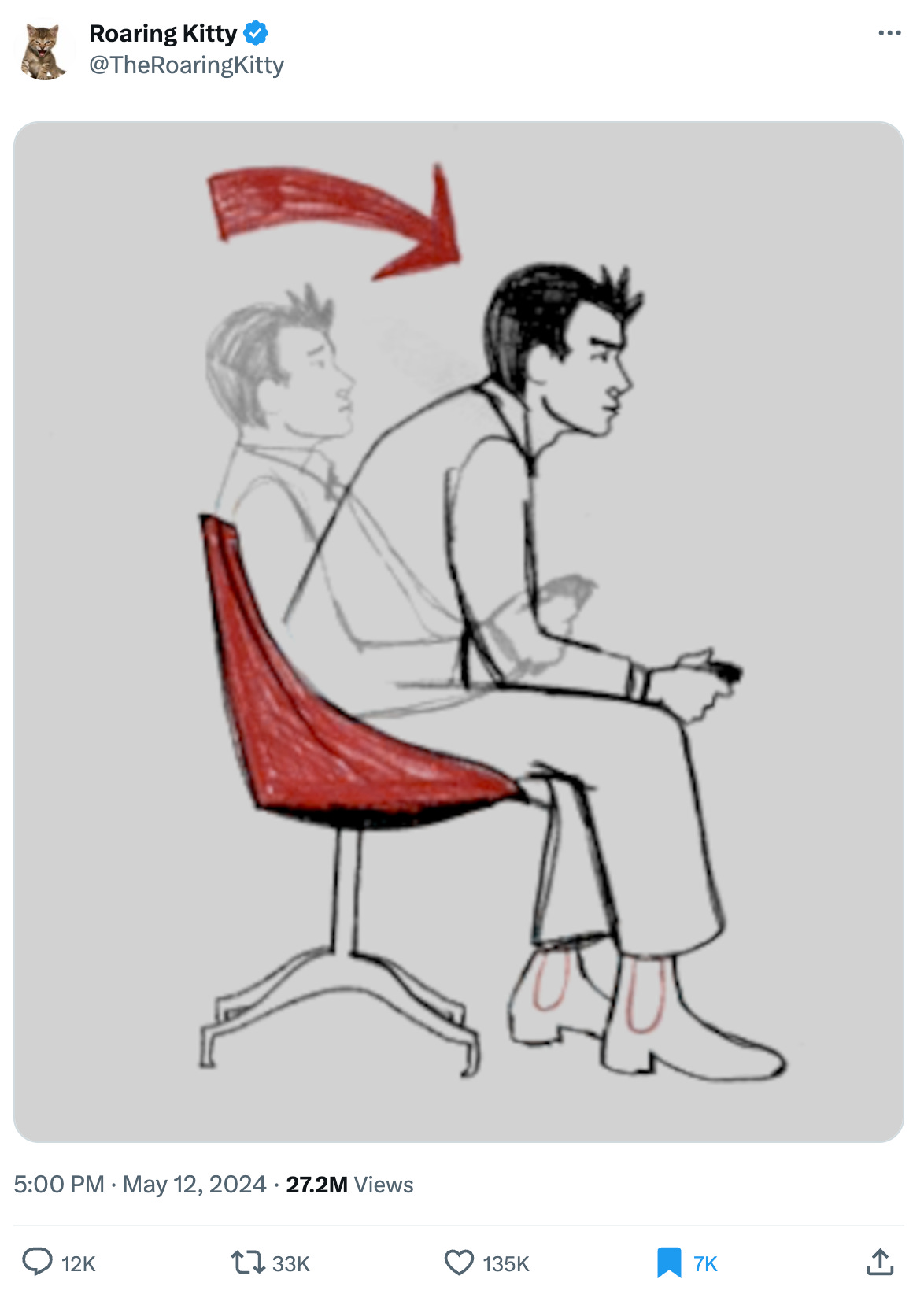

A single tweet last weekend from Roaring Kitty (better known as DeepFuckingValue on Reddit), his first after more than 2 years of inactivity, sparked another run-up in GameStop’s stock. For a few brief days, the stock partied like it was 2021. Perhaps DFV blew through his tens of millions of dollars and was looking for another score. All he had to do was load up on options on Friday. Other meme stocks like AMC also exploded upwards.

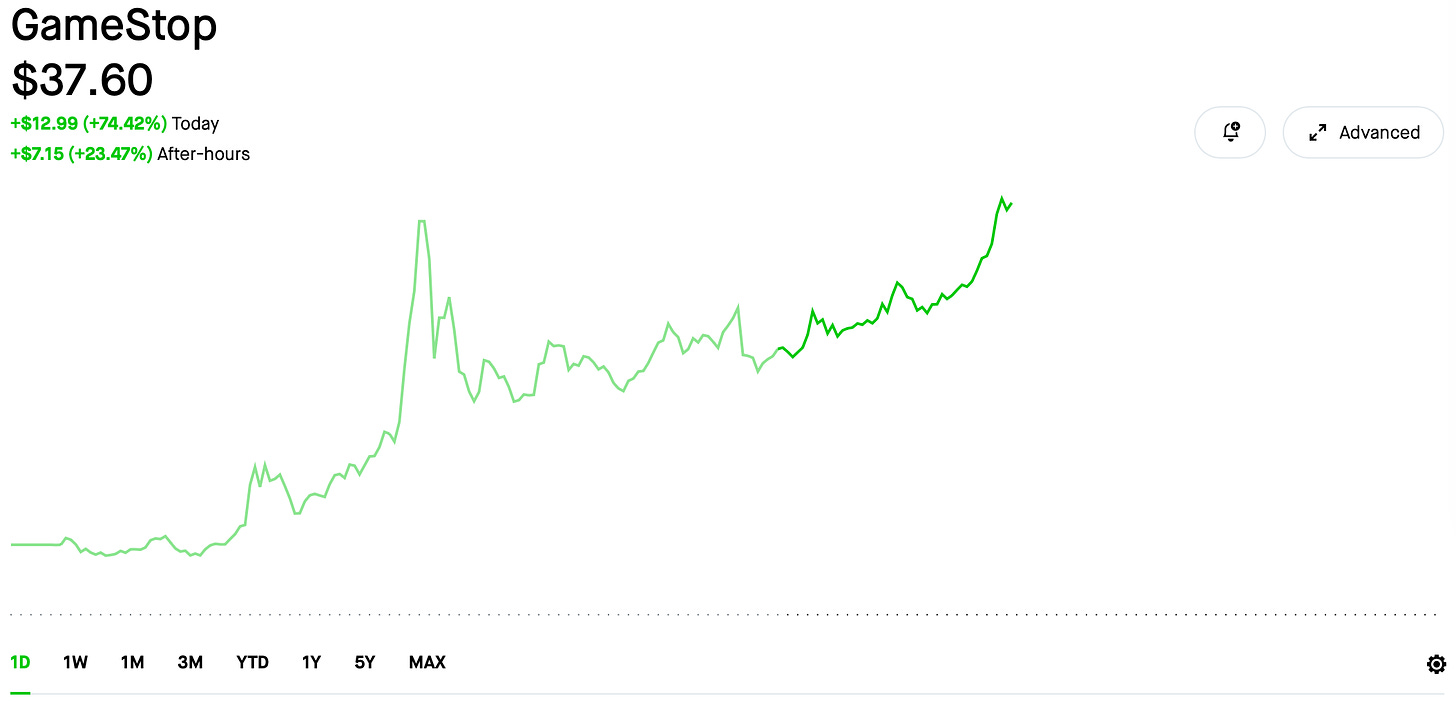

On Monday:

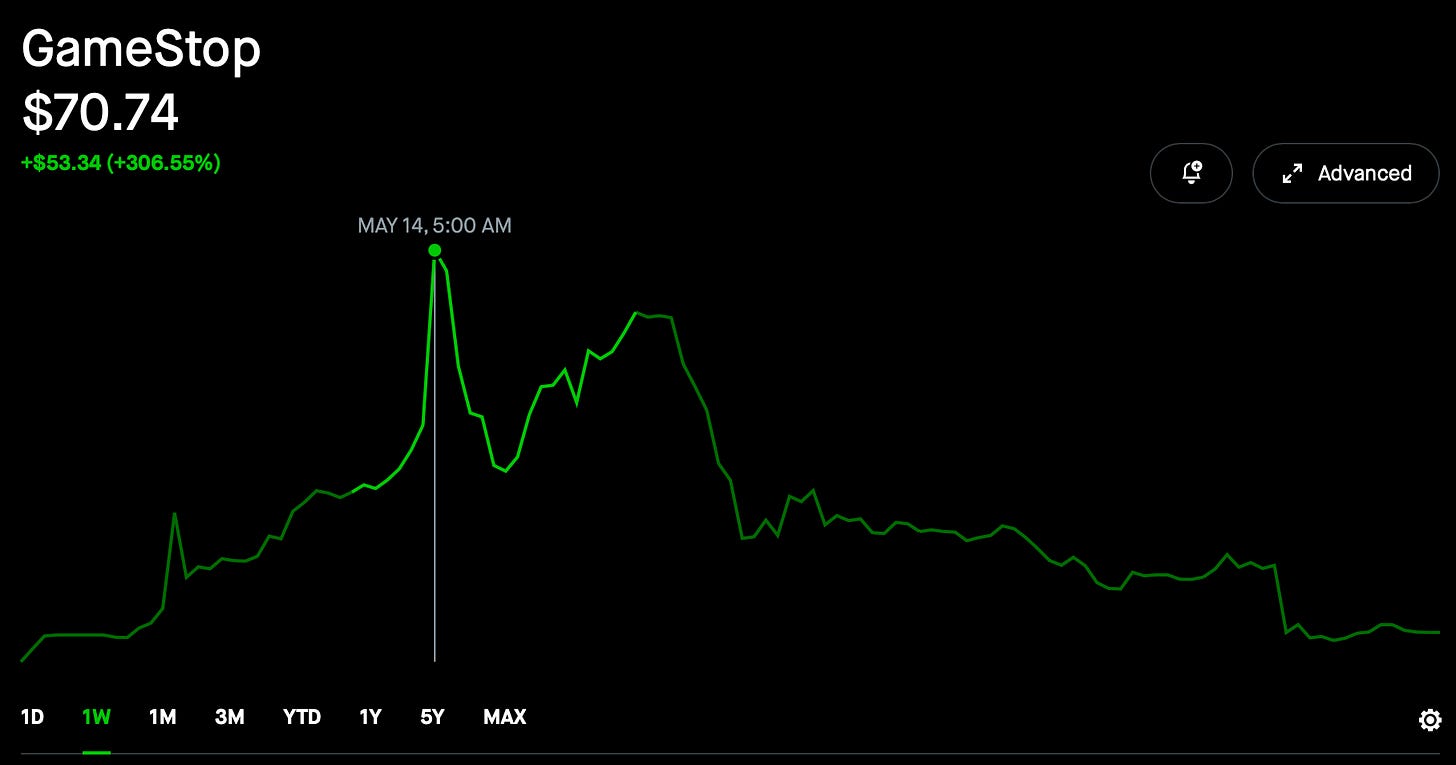

But by the end of the week, GME had fallen back down to earth. Rumor is that the hedge funds were prepared this time and managed to ride some of the wave up (especially after everyone noticed DFV’s tweet on Sunday).

Meanwhile, Trump Media & Technology Group, purveyor of Truth Social, is the new leading meme stock, with a market cap on Friday of almost $7 billion on 2023 revenue of $4 million and a net loss of $58 million.
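For a sense of scale, the figures above work out roughly as follows. This is back-of-the-envelope arithmetic on the rounded numbers quoted in the text (the market cap is taken as a flat $7 billion, although the text says “almost”), so the ratios are approximate.

```python
# Rounded figures quoted in the text above
market_cap = 7_000_000_000   # "almost $7 billion", rounded up
revenue_2023 = 4_000_000
net_loss_2023 = 58_000_000

# How many dollars of market value per dollar of annual revenue
price_to_sales = market_cap / revenue_2023

# How many dollars were lost for each dollar of revenue
loss_per_revenue_dollar = net_loss_2023 / revenue_2023

print(f"Price-to-sales: about {price_to_sales:,.0f}x")            # about 1,750x
print(f"Loss per $1 of revenue: ${loss_per_revenue_dollar:.2f}")  # $14.50
```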

Further Observations

Susanne is traveling for most of this week and next so I’m solo parenting. It’s a lot of work, especially on the weekends.

Elon Musk’s mercurial firing of the entire Tesla Supercharger team appears to have been a combination of pique and collective punishment.

Articles

iPhone owners say the latest iOS update is resurfacing deleted nudes (The Verge)

Also reported on Reddit.

ChatGPT can talk, but OpenAI employees sure can’t (Vox)

A lot of money can override a lot of morals.

The Unpunished: How Extremists Took Over Israel (New York Times)

See the most detailed map of human brain matter ever created (Popular Science)

It is eye-opening to see how interconnected a single neuron is.

How to win the Hermès game (The Hustle)

‘Hell hath no fury like a wealthy person being told no’: can elite shoppers really force Hermès to sell them Birkins? (The Guardian)

Fast Food Forever: How McHaters Lost the Culture War (New York Times)

The Fad Diet to End All Fad Diets (The Atlantic)

Diversions

Beautiful Aurora photos from the New York Times, The Guardian, Forbes & Buzzfeed

Charts, Images & Videos